
There is a quiet assumption embedded in most AI agent implementations: that your data, your model calls, and your infrastructure telemetry are acceptable collateral for someone else's managed service. You fire a request at a cloud endpoint, the agent reasons, tools execute, traces land in a hosted observability dashboard, and memory persists in a managed store. Convenient. Fast to prototype. And a structural problem if you are building AI infrastructure for a sovereign context.

The question that reframes everything is not "which managed service is easiest?" It is: what crosses the border, and what stays inside it?

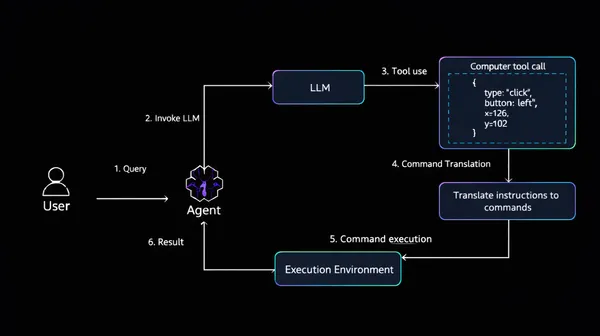

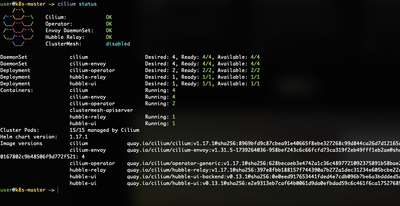

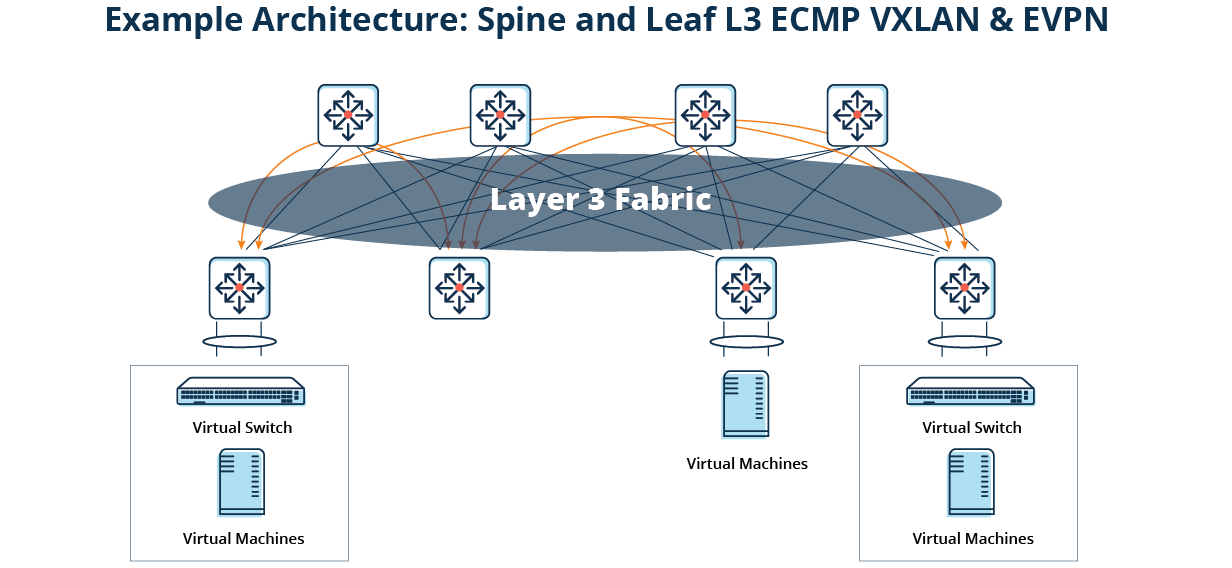

Model weights, inference compute, session memory, telemetry, tool execution: in a sovereign AI deployment, every component needs an explicit answer to the question of data residency. This post documents a complete, working implementation of a production-grade AI agent stack built on open-source components and running on Kubernetes, with AI inference running entirely on-premises. Where external data retrieval is needed (web search, in this implementation), that boundary is explicit, controlled, and replaceable. The goal is not to demonstrate that open source can approximate managed cloud services. It is to show that the core of a sovereign agent stack (the model, the reasoning, the memory, the telemetry) can run entirely within a perimeter you control.
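One way to make "what crosses the border" concrete is to keep an explicit residency inventory of the stack and audit it. The sketch below is illustrative only: the component names, the `Residency` labels, the `ALLOWED_EGRESS` policy, and the `audit` helper are hypothetical and not part of the implementation described in this post. It simply encodes the rule stated above: everything stays inside the perimeter unless it is an explicitly approved, replaceable egress point such as web search.

```python
from dataclasses import dataclass
from enum import Enum


class Residency(Enum):
    INSIDE = "inside"  # data and compute stay within the perimeter
    EGRESS = "egress"  # data explicitly crosses the boundary


@dataclass(frozen=True)
class Component:
    name: str
    residency: Residency
    replaceable: bool = False  # can the external dependency be swapped out?


# Hypothetical inventory mirroring the components named in this post.
STACK = [
    Component("model-weights", Residency.INSIDE),
    Component("inference", Residency.INSIDE),
    Component("session-memory", Residency.INSIDE),
    Component("telemetry", Residency.INSIDE),
    Component("tool-execution", Residency.INSIDE),
    Component("web-search", Residency.EGRESS, replaceable=True),
]

# Hypothetical policy: the only components allowed to cross the border.
ALLOWED_EGRESS = {"web-search"}


def audit(stack, allowed_egress):
    """Return names of components that cross the border without explicit
    approval, or that are approved but not replaceable."""
    violations = []
    for c in stack:
        if c.residency is Residency.EGRESS:
            if c.name not in allowed_egress or not c.replaceable:
                violations.append(c.name)
    return violations


if __name__ == "__main__":
    crossing = [c.name for c in STACK if c.residency is Residency.EGRESS]
    print("crosses the border:", crossing)
    print("unapproved egress:", audit(STACK, ALLOWED_EGRESS))
```

Run as-is, this reports `web-search` as the only component crossing the border and no unapproved egress. Adding, say, a hosted tracing backend as an `EGRESS` component without updating the policy would surface it as a violation, which is the kind of explicit decision this post argues every component should get.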